Deepfake X-Ray Warning: How AI-Generated Medical Images Threaten Healthcare Security

Medical researchers warn that AI-generated deepfake X-rays can deceive healthcare systems, posing serious risks to patient safety and medical integrity. Learn how this technology works and what safeguards are being developed.

The Invisible Threat in Medical Imaging

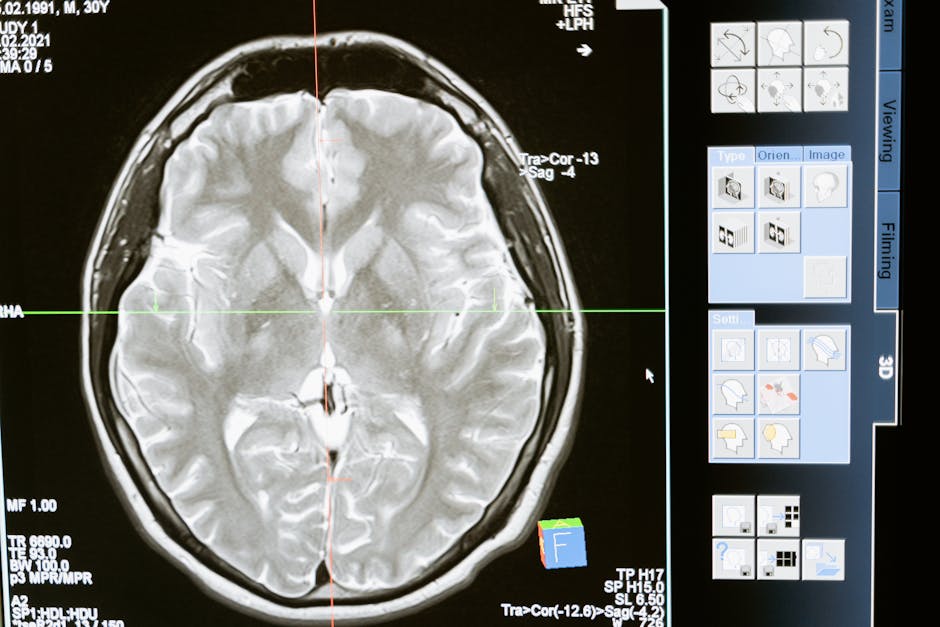

Imagine a world where your X-ray results can be fabricated so precisely that even experienced radiologists struggle to tell the difference. This isn't science fiction: it is the risk researchers are now warning hospitals about. Deepfake technology, once associated mainly with fake videos and political misinformation, can now target one of healthcare's most trusted tools: medical imaging.

The Silent Invasion of Medical Deepfakes

Medical deepfakes apply generative AI to clinical images. Using generative adversarial networks (GANs) and diffusion models, attackers can create convincing fake X-rays, CT scans, and MRI images that mimic real medical conditions or hide existing pathologies. These are not lightly retouched pictures: they are fully synthesized or heavily altered images, realistic enough to pass visual inspection and basic authenticity checks.

The technology works by training AI models on large datasets of real medical images, so that the models learn the visual patterns of conditions ranging from broken bones to tumors. Once trained, a model can generate new images showing a specific condition on demand, complete with realistic anatomical structures and imaging artifacts that make them very hard to distinguish from genuine scans.

Why This Matters More Than You Think

The implications are serious. Malicious actors could use this technology in several ways:

- False Insurance Claims: Generate evidence of non-existent injuries for insurance fraud

- Drug Seeking: Create images showing conditions that require prescription medications

- Medical Malpractice: Plant false evidence in medical records

- Research Manipulation: Compromise medical studies with fabricated data

What makes this particularly dangerous is that medical imaging often serves as objective evidence in legal, insurance, and treatment decisions. If that evidence can no longer be trusted, every decision built on it becomes open to doubt.

The Technical Challenge of Detection

Detecting these deepfakes requires more than human expertise. Traditional digital forensics methods struggle because:

- Medical images undergo compression and processing that can strip out the digital fingerprints forensic tools rely on

- Legitimate scanners and acquisition settings vary, so genuine images already contain natural inconsistencies

- Healthcare systems often prioritize speed over security in image transmission

Researchers are developing AI-powered detection systems that analyze subtle statistical patterns and metadata anomalies. However, this creates an arms race where detection methods must constantly evolve against improving generation techniques.
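As a rough illustration of what "subtle statistical patterns" can mean, the sketch below computes one crude feature: the variance of a Laplacian (high-frequency) residual, compared against a range measured on trusted scans. This is an assumption-laden toy, not a method described by the researchers. The function names, the list-of-rows image format, and the thresholds are all hypothetical, and real detectors typically combine many such features inside a trained model.

```python
from statistics import pvariance


def laplacian_residual(image):
    """Return the 4-neighbour Laplacian response for every interior pixel.

    `image` is assumed to be a list of equal-length rows of grey values.
    """
    rows, cols = len(image), len(image[0])
    residual = []
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            neighbours = (
                image[r - 1][c] + image[r + 1][c]
                + image[r][c - 1] + image[r][c + 1]
            )
            residual.append(4 * image[r][c] - neighbours)
    return residual


def high_frequency_score(image):
    """Variance of the Laplacian residual: a crude measure of fine texture."""
    return pvariance(laplacian_residual(image))


def is_suspicious(image, low, high):
    """Flag an image whose score falls outside the range seen on trusted scans.

    `low` and `high` are hypothetical bounds that would have to be calibrated
    per scanner model and acquisition protocol.
    """
    score = high_frequency_score(image)
    return not (low <= score <= high)


# Toy inputs: a perfectly flat image and a checkerboard with extreme texture.
flat = [[10] * 5 for _ in range(5)]
checker = [[((r + c) % 2) * 100 for c in range(5)] for r in range(5)]
```

A score outside the calibrated range is only a reason to look closer, not proof of fabrication, since legitimate processing can also shift high-frequency statistics.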

Who Should Be Concerned

Healthcare Providers

Radiologists and medical imaging departments need to implement verification protocols and AI-assisted detection tools. The days of trusting images at face value are ending.

Insurance Companies

Claims processing must evolve to include image authentication steps, especially for high-value claims involving medical evidence.

Medical Researchers

Study protocols need additional safeguards against data contamination from fabricated images.

Policy Makers

Healthcare regulations must address digital image authentication standards and liability frameworks.

The Road Ahead: Solutions and Safeguards

The healthcare industry is responding with multi-layered approaches:

Blockchain Verification: Some institutions are experimenting with blockchain-based image provenance tracking, which creates tamper-evident records of an image's origin and every later modification.
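The core idea behind provenance tracking can be sketched with a simple hash chain, in which each log entry commits to the image's hash and to the previous entry. This is a simplified stand-in, not a real blockchain: there is no distributed consensus, and the field names and helper functions below are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def record_hash(record):
    """Hash a record deterministically by serialising it with sorted keys."""
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_event(chain, image_bytes, action):
    """Append a provenance entry that commits to the image and the prior entry."""
    body = {
        "action": action,
        "image_sha256": hashlib.sha256(image_bytes).hexdigest(),
        "previous_hash": chain[-1]["entry_hash"] if chain else GENESIS,
    }
    entry = dict(body, entry_hash=record_hash(body))
    chain.append(entry)
    return entry


def verify_chain(chain):
    """Return True only if every entry is intact and correctly linked."""
    previous = GENESIS
    for entry in chain:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if entry["previous_hash"] != previous:
            return False
        if record_hash(body) != entry["entry_hash"]:
            return False
        previous = entry["entry_hash"]
    return True


# Usage: log an acquisition and a later edit, then tamper with the first entry.
log = []
append_event(log, b"raw pixel data", "acquired")
append_event(log, b"windowed pixel data", "contrast-adjusted")
intact_before = verify_chain(log)
log[0]["image_sha256"] = "f" * 64  # simulate swapping in a different image
intact_after = verify_chain(log)
```

Changing any earlier entry breaks every later link, which is what makes the record tamper-evident rather than tamper-proof: the alteration is still possible, but it can be detected.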

AI Detection Systems: Specialized neural networks trained specifically on medical image deepfakes can identify subtle generation artifacts invisible to human eyes.

Hardware Authentication: Some newer imaging equipment can cryptographically sign each image so that the source device can be verified later.
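A minimal sketch of device-side signing follows. It uses an HMAC from Python's standard library as a stand-in. A real deployment would more likely use asymmetric signatures, so that verifiers never hold the device's secret. The key handling and function names here are assumptions made for illustration.

```python
import hashlib
import hmac


def sign_image(device_key, image_bytes):
    """Compute an authentication tag over the image using the device's secret key."""
    return hmac.new(device_key, image_bytes, hashlib.sha256).hexdigest()


def verify_image(device_key, image_bytes, tag):
    """Check the tag in constant time; any change to the bytes or key fails."""
    expected = sign_image(device_key, image_bytes)
    return hmac.compare_digest(expected, tag)


# Usage: the scanner tags the image at acquisition; the archive checks it later.
scanner_key = b"hypothetical-per-device-secret"
original = b"raw pixel data from the detector"
tag = sign_image(scanner_key, original)

genuine_ok = verify_image(scanner_key, original, tag)
tampered_ok = verify_image(scanner_key, original + b" (edited)", tag)
```

A check like this shows only that the bytes are unchanged since the device signed them. It says nothing about an image that was fabricated before it ever reached a signing device.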

Human-AI Collaboration: Radiologists are being trained to work with AI assistants that flag suspicious images for additional verification.

This challenge mirrors broader AI security concerns. As in other domains, autonomous AI auditors are becoming important for maintaining system integrity across industries, including healthcare.

The Bigger Picture: AI Ethics in Healthcare

This development raises critical questions about AI ethics in medical applications. While AI offers tremendous benefits for diagnosis and treatment, it also creates new vulnerabilities. The healthcare community must balance innovation with security, ensuring that AI tools serve patients rather than threaten their care.


The emergence of medical deepfakes serves as a wake-up call. It reminds us that as AI capabilities grow, so must our vigilance in protecting the systems we depend on for health and safety. The medical community, technology developers, and regulators must work together to build healthcare systems that leverage AI's benefits while safeguarding against its potential misuses.
